New MIT Research Shows Spectacular Increase In White Collar Productivity From ChatGPT

Two economics PhD candidates at MIT just published a fascinating study of the impact of ChatGPT on white-collar productivity. And the results are pretty spectacular. (Note: this is not yet peer reviewed.)

The team asked 444 white-collar workers to do writing and editing tasks in areas such as marketing, grant writing, data analysis, and human resources, and split them into two groups: one that used ChatGPT and one that did not. The workers completed 20- to 30-minute assignments that the researchers considered representative of these functional areas, and their work was then “graded” by evaluators who work in these job areas. The team looked at the speed of results, the quality of results, and the actual role ChatGPT played (whether it replaced, augmented, or confused the work).

The results are fairly spectacular.

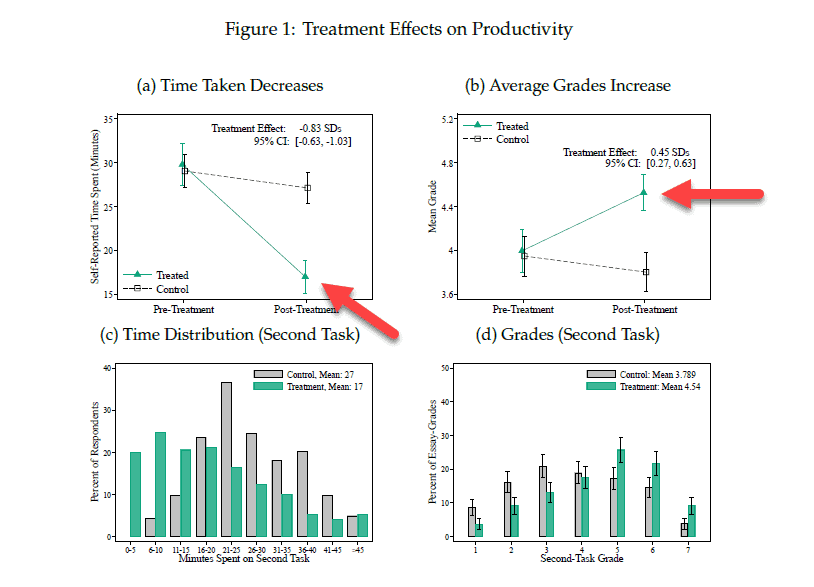

The ChatGPT-using group was 37% faster at completing tasks (17 minutes vs. 27 minutes) with roughly similar grades (level of quality), and as the workers repeated their tasks to improve them, the ChatGPT group’s quality went up significantly faster. In other words, ChatGPT made work speedier with no sacrifice in quality and then made it easier to “improve work quickly” using the tool.
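The 37% figure can be checked from the two completion times quoted above. The short Python sketch below (the function names are illustrative, not taken from the study) computes the time saved relative to the 27-minute baseline and, for comparison, the same result expressed as throughput, since “faster” can be read either way.

```python
def percent_time_saved(baseline_min: float, assisted_min: float) -> float:
    """Reduction in completion time, as a percentage of the baseline time."""
    return (baseline_min - assisted_min) / baseline_min * 100


def percent_throughput_gain(baseline_min: float, assisted_min: float) -> float:
    """Extra tasks finished per unit of time, as a percentage of baseline throughput."""
    return (baseline_min / assisted_min - 1) * 100


# Average completion times reported in the article (minutes per task).
control_minutes = 27
chatgpt_minutes = 17

print(f"Time saved: {percent_time_saved(control_minutes, chatgpt_minutes):.0f}%")
print(f"Throughput gain: {percent_throughput_gain(control_minutes, chatgpt_minutes):.0f}%")
```

Cutting a task from 27 to 17 minutes saves about 37% of the time, which is equivalent to finishing roughly 59% more tasks in the same period; the “37% faster” figure corresponds to the first measure.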

The researchers went further: they asked the participants to complete work in a fixed amount of time and showed that “production volume” increased while the quality of work remained fairly constant.

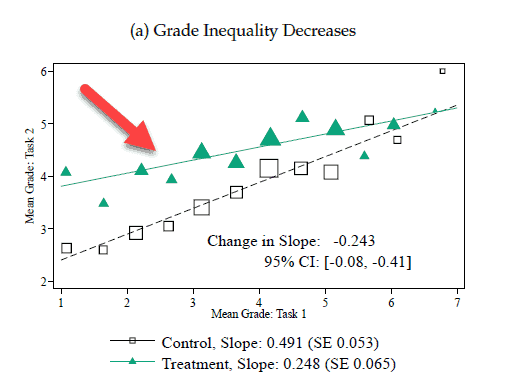

Then they asked the participants to “iterate” on their work to improve quality, and once again the ChatGPT group outperformed their peers. As this chart shows, the aided team scores higher on quality at the outset, and after multiple iterations the two groups start to come together. This is true despite the fact that 68% of the ChatGPT group submitted results from only one query, suggesting that ChatGPT dramatically reduces effort (i.e., people are not iterating a lot to get a better and better answer).

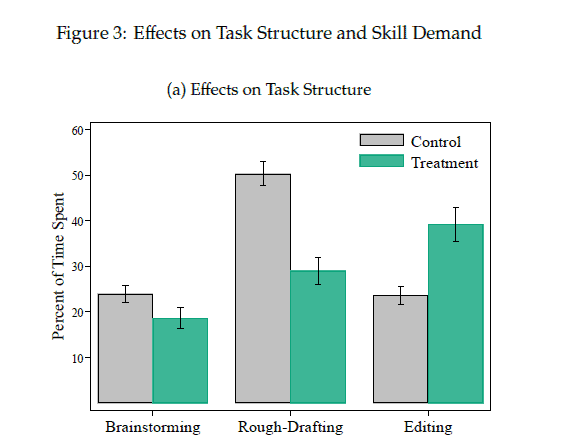

How does ChatGPT generate these amazing results? Well, the team also asked people “what they used ChatGPT for” and found the following. The tool somewhat reduces brainstorming and greatly reduces rough-draft creation, but it is used more actively during the final editing process. In other words, this is a system that greatly speeds up the “first draft” and “initial findings” part of the work and is then used slightly more intensely for the final draft.

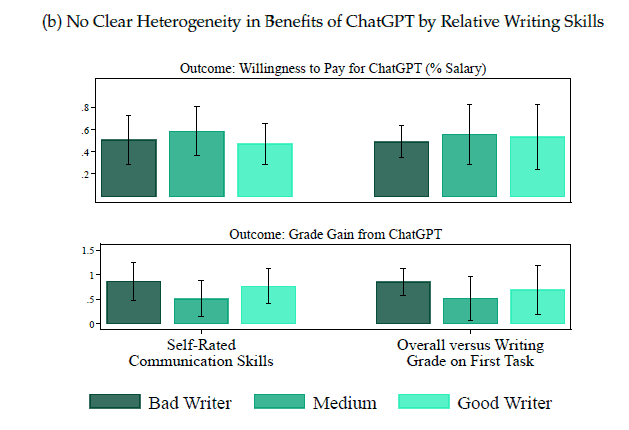

And it gets even better. When the researchers asked respondents to rate their own writing skill, the “willingness to pay” and “value received” were almost identical for “bad writers” and “good writers.” In other words, ChatGPT helps “bad writers” get good and helps “good writers” go faster and possibly get better!

And here’s the most astounding finding of all. The respondents who used ChatGPT told the researchers they were willing to pay a monthly fee of 0.5% of their salary to access this tool! For a worker earning $100,000 a year, this equates to $500 per month to use this system. (Microsoft has a lot of business opportunity here.)
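The dollar figure follows from applying the 0.5% rate to annual salary, as the $100,000 example does. A minimal sketch under that assumption (the function name and the annual-salary basis are this illustration’s assumptions, not details confirmed from the paper):

```python
def monthly_willingness_to_pay(annual_salary: float, rate: float = 0.005) -> float:
    """Monthly fee implied by applying `rate` (0.5% by default) to an annual salary.

    Assumption: the 0.5% is taken of annual salary, as in the article's
    $100,000 example; the study's exact definition may differ.
    """
    return annual_salary * rate


fee = monthly_willingness_to_pay(100_000)
print(f"${fee:,.0f} per month")  # prints "$500 per month"
```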

Implications Of This Research

I have been using ChatGPT and Bing Chat for several weeks now, and yes, they are enormously useful for many things. While their accuracy must be checked (they sometimes find old data or misrepresent data, as the 60 Minutes show pointed out), they are enormously valuable for finding complex information, putting comparisons together (e.g., comparing Microsoft’s financials from 2021 to 2022), and creating summaries of complex data (e.g., “What does Josh Bersin do for a living?”). I have also seen vendors use them to generate learning plans, management coaching tips, and Q&A systems (à la teaching assistants).

And with Microsoft’s announcement this week of Copilots and development tools for building generative AI chatbots for business apps and internal data, you can create these kinds of benefits for your own company.

But there’s a much bigger story here. For some reason, the New York Times and other publications seem to be filled with articles about the “dangers” of AI. I won’t mention names, but there are many outspoken experts who claim these tools are going to be used for misinformation, lies, and general world havoc.

While I fully understand their worries, I am left with the following thought. I would imagine that people had similar worries when the first printing press, pencil, and paper were created. These were “tools for information warfare” just as social networks, blogs, and social video sites have been in the last decade. Every technology for communication (the phone, the digital phone, the video camera, and all forms of written communication) has enabled nefarious (and sloppy) people to distribute misleading information. One could argue that the entire adtech industry is built to facilitate this.

This tool, which statistically finds and organizes massive amounts of text (and graphical) information, is very much the same. Any risks or errors these tools create will become the responsibility (and liability) of the providers. So companies that use these tools, offer information services, and certify their accuracy will be legally responsible for their behavior. The “tool creators” like OpenAI and others will have no incentive for their tools to be “incorrect” or “faulty” so they will improve them.

In other words, in my opinion (and I am not an attorney), these systems look to be as important to the world as the pencil, the printing press, and the digital camera. Let’s not confuse “how they’re used” with “what they do,” and let’s keep in mind how early in their development they are.

To me, this study confirms my belief that Generative AI, and ChatGPT in particular, has an enormously positive role to play in our business and personal lives in the future. It’s up to us, as users, to learn to use it for good.

Additional Information

Microsoft Launches OpenAI CoPilots For Dynamics Apps And The Enterprise.

Fighting ‘Woke AI,’ Musk Recruits Team to Develop OpenAI Rival

Mark Zuckerberg announces Meta’s new large language model as A.I. race heats up

Understanding Chat-GPT, And Why It’s Even Bigger Than You Think (*updated)

What Is A Large Language Model (LLM) Anyway? (good overview)

Why Microsoft’s Investment in OpenAI Threatens Google (Fortune)

Listen to Satya Nadella Describe Microsoft’s View of OpenAI